Demonstration Session Information

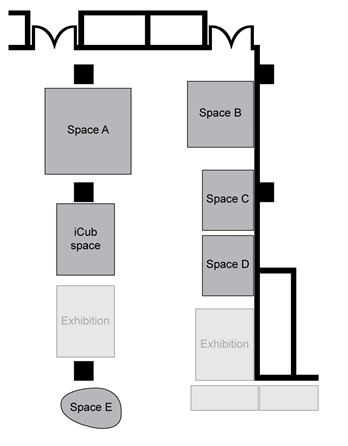

Demonstrations will be shown on the exhibition floor at the Hilton convention center (Golden Gate Ballroom, lobby level), next to the multimedia sessions. A PDF version showing barriers is available here.

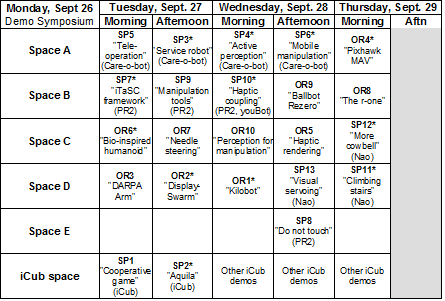

Schedule

Morning: 9 am - 11:30 am

Afternoon: 1 pm - 3:30 pm

List of Demonstrations

Standard Platform Demonstrations:

A number of standard platforms for mobility and manipulation in robotics research now exist, both as off-the-shelf commercial products and as widely distributed research platforms. Several manufacturers have made their platforms available for demonstration. There are two benefits to this arrangement: demonstrators will not have to transport hardware, and the demonstrations will show the increasing use of standard hardware as a means of attacking advanced applications. The accepted demonstrations for this session are:

SP1 – iCub Learning a Cooperative Game through Interaction with his Human Partner

Stephane Lallee, Ugo Pattacini, Lorenzo Natale, Giorgio Metta, Peter Ford Dominey

Stem Cell and Brain Research Institute, Bron, France, Italian Institute of Technology, Genoa, Italy

Platform: iCub

Abstract: A human can interact with the iCub by pointing and speaking together in order to learn, name and play with unknown objects on a table. The user can then ask for more complex actions and “program” small games by instructing the robot with a shared plan.

SP2 – Aquila - The Cognitive Robotics Toolkit (*)

Martin Peniak, Anthony F. Morse

University of Plymouth, UK

Platform: iCub

Abstract: A live demonstration of Aquila, an open source cognitive robotics software toolkit for the iCub robot. This demonstration includes modeling sensorimotor learning in child development, action acquisition, and robot teleoperation.

SP3 – General Purpose Service Robot (*)

Nico Hochgeschwender, Jan Paulus, Michael Reckhaus, Frederik Hegger, Christian A. Mueller, Sven Schneider, Paul G. Ploeger, Gerhard K. Kraetzschmar

Bonn-Rhein-Sieg University of Applied Sciences, Bonn, Germany

Platform: Care-O-Bot 3

Abstract: In our demonstration we show a general purpose service robot which performs various tasks on demand.

SP4 – Active Perception Planning on the Care-o-Bot platform (*)

Robert Eidenberger, Michael Fiegert, Georg von Wichert, Gisbert Lawitzky

Siemens, Munich, Germany

Platform: Care-O-Bot 3

Abstract: The Care-O-Bot platform localizes household objects in complex environments and grasps them. Environment perception and active perception planning are demonstrated and used for efficient scene modeling.

SP5 – Semi-autonomous Tele-Operation Interface for Robotic Fetch and Carry Tasks

Renxi Qiu1, Alexandre Noyvirt1, Nayden Chivarov2, Rafael Lopez3, Georg Arbeiter4, Dayou Li5

1Cardiff Univ., UK; 2Bulgarian Academy of Science, Bulgaria; 3Robotnik Automation, Spain; 4Fraunhofer IPA, Germany; 5Univ. of Bedfordshire, UK

Platform: Care-O-Bot 3

Abstract: The demonstration focuses on a remote user interface, such as an Apple iPad, for tele-operating the robot semi-autonomously. It enables non-expert users to take charge of the robot around the home.

SP6 – Using Mobile Manipulation to solve a Household Task (*)

Florian Weisshardt, Alexander Bubeck, Jan Fischer, Ulrich Reiser

Fraunhofer IPA, Stuttgart, Germany

Platform: Care-O-Bot 3

Abstract: The task of serving objects scales considerably in difficulty, ranging from tabletop manipulation of simple objects to retrieving objects that first require mobile manipulation of the environment.

SP7 – Demonstration of iTaSC as a unified framework for task specification, control, and coordination for mobile manipulation (*)

Dominick Vanthienen, Tinne De Laet, Markus Klotzbuecher, Ruben Smits, Wilm Decré, Koen Buys, Steven Bellens, Herman Bruyninckx, and Joris De Schutter

Katholieke Universiteit Leuven, Belgium

Platform: PR2

Abstract: Mobile co-manipulation task of a PR2 and a human, to illustrate the potential of the iTaSC method and its Orocos implementation to specify sensor-based, multi-frame, partially-specified robot tasks.

SP8 – Please (Do Not) Touch the Robot

Joseph M. Romano, Katherine J. Kuchenbecker

University of Pennsylvania, Philadelphia, USA

Platform: PR2

Abstract: Natural human-robot interaction requires a tight coupling between sensing and control. Our PR2 demo uses high-bandwidth tactile signals to showcase dynamic interactive behaviors such as high-fives.

SP9 – Interactive Manipulation Tools for the PR2 Robot

Matei Ciocarlie, Kaijen Hsiao

Willow Garage, Menlo Park, USA

Platform: PR2

Abstract: Interactive manipulation tools for the PR2: everything from low-level tools such as moving grippers around in Cartesian space, to high-level tools such as grasping segmented or recognized objects.

SP10 – Haptic coupling with augmented feedback between the KUKA youBot and the PR2 robot arms (*)

Steven Bellens, Koen Buys, Nick Vanthienen, Tinne De Laet, Ruben Smits, Markus Klotzbuecher, Wilm Decré, Herman Bruyninckx, and Joris De Schutter

Katholieke Universiteit Leuven, Belgium

Platform: PR2 and youBot

Abstract: We demonstrate haptic coupling between the KUKA youBot and the PR2 robot arms. A wearable display provides the operator with PR2 camera images, and the operator's head movement is coupled with the PR2's head.

SP11 – Detecting and Climbing Stairs with a Laser-equipped Nao Humanoid (*)

Stefan Osswald, Armin Hornung, Maren Bennewitz

University of Freiburg, Germany

Platform: Nao

Abstract: We demonstrate autonomous stair climbing based on laser and vision data. After collecting a 3D laser scan, Nao detects steps of a staircase and climbs the staircase using visual information.

SP12 – More cowbell! A musical ensemble with the NAO thereminist (*)

Angelica Lim, Takeshi Mizumoto, Takuma Otsuka, Tatsuhiko Itohara, Kazuhiro Nakadai1,2, Tetsuya Ogata, Hiroshi G. Okuno

Kyoto University; 1Honda Research Institute, Wako, Japan; 2Tokyo Institute of Technology, Japan

Platform: Nao

Abstract: We present the first live performance of our interactive music robot: Nao plays the theremin and listens to humans to stay in sync. Demo attendees are invited to join by playing cowbell or maracas.

SP13 – Model-based Visual Servoing Tasks on Nao Robot

A. Abou Moughlbay1, J. J. Sorribes2, E. Cervera2, P. Martinet1

1LASMEA, Blaise Pascal University, France

2RobInLab, Jaume-I University, Spain

Platform: Nao

Abstract: Model-based visual servoing is implemented on the Nao robot. Based on geometric models, the robot performs localization, tracking and grasping of objects in a semi-structured environment.

Open Research Demonstrations:

The goal of the open research demonstration session is to give participants an open forum to present their latest developments on robotic systems and software. The accepted demonstrations for this session are:

OR1 – Kilobot: A Low Cost Scalable Robot System for Collective Behaviors (*)

Michael Rubenstein, Radhika Nagpal

Harvard University, Cambridge, USA

Abstract: The Kilobot is a low-cost, easy-to-use robot designed for testing swarm algorithms on hundreds to thousands of robots. We will demo robot operations and sample behaviors on 100 Kilobots.

OR2 – DisplaySwarm: A robot swarm displaying images (*)

Javier Alonso-Mora1,2, Andreas Breitenmoser1, Martin Rufli1, Stefan Haag, Gilles Caprari3, Roland Siegwart1, Paul Beardsley2

1ETH Zurich; 2Disney Research Zurich; 3CGTronic Lugano, Switzerland

Abstract: DisplaySwarm represents images in a novel way using small mobile robots with controllable colored illumination. The work addresses research questions in collision avoidance and pattern formation.

OR3 – The DARPA ARM Robot: Available at Your Desk

Gill Pratt1, Jim Pippine2, Andrew Mor3, Natalie Salaets4

1DARPA DSO; 2Golden Knight Technologies; 3RE2, USA; 4System Planning Corporation, USA

Abstract: The DARPA ARM demonstration features both the publicly available ARM robot simulator and a real remote robot. Visitors interact with the simulator to perform various tasks, then watch the real remote robot do the same tasks.

OR4 – Flying the Pixhawk MAV - A Computer Vision Controlled Quadrotor (*)

Lorenz Meier, Petri Tanskanen, Lionel Heng, Gim-Hee Lee, Friedrich Fraundorfer, Marc Pollefeys

ETH Zurich, Switzerland

Abstract: The Pixhawk micro air vehicle is an autonomous flying robot equipped with cameras and an onboard computer. It will fly based on onboard visual localization and perform object tracking and pattern recognition tasks.

OR5 – Proxy Method for Fast Haptic Rendering from Time Varying Point Clouds

Fredrik Ryden, Sina Nia Kosari, Howard Jay Chizeck

University of Washington, Seattle, USA

Abstract: This demonstration will allow users to directly “feel” physical objects imaged by a Kinect. They will be able to directly experience haptic rendering of both fixed and moving objects in real-time.

OR6 – Bio-inspired vertebral column, compliance and semi-passive dynamics in a lightweight humanoid robot (*)

Olivier Ly, Matthieu Lapeyre, Pierre-Yves Oudeyer

LABRI-INRIA, Talence, France

Abstract: We demonstrate the use of compliance and vertebral column in humanoid locomotion.

OR7 – A Robotic System for Needle Steering

Ann Majewicz1, John Swensen2, Tom Wedlick2, Kyle Reed3, Ron Alterovitz4, Vinutha Kallem5, Wooram Park6, Gregory Chirikjian2, Ken Goldberg7, Animesh Garg7, Noah Cowan2, and Allison Okamura1

1Stanford Univ.; 2Johns Hopkins Univ.; 3Univ. of South Florida; 4University of North Carolina at Chapel Hill; 5Univ. of Pennsylvania; 6Univ. of Texas at Dallas; 7UC Berkeley, USA

Abstract: A live demonstration of robotic needle steering in artificial tissue, as well as videos and posters about models and simulations, path planners, controllers, and integration with medical imaging.

OR8 – The r-one: A Low-Cost Robot for Research, Education, and Outreach

A. Lynch, J. McLurkin

Rice University, Houston, USA

Abstract: We present the r-one: An advanced, low-cost robot for research and education. Come see autonomous multi-robot exploration, drive a mini-swarm, and write your program at programming stations.

OR9 – Ballbot Rezero

Simon Doessegger, Peter Fankhauser, Corsin Gwerder, Jonathan Huessy, Jerome Kaeser, Thomas Kammermann, Lukas Limacher, Michael Neunert, Francis Colas, Cedric Pradalier, Roland Siegwart

ETH Zurich, Switzerland

Abstract: Ballbots are able to balance and drive on a single sphere. Designed for high agility, organic motion and human interaction, Ballbot Rezero exploits its inherent, unstable dynamics.

OR10 – Generic Perception for Manipulation

Manuel Brucker1, Chavdar Papazov2, Simon Leonard3, Darius Burschka2, Gregory D. Hager3, Tim Bodenmueller1

1DLR, Germany; 2TU Munich, Germany; 3Johns Hopkins University, Baltimore, USA

Abstract: Our scene parsing algorithm leverages physical constraints to enhance data fitting over time and space. Our demonstration will show a robot manipulating complex scenes composed of various objects.

Symposium

A special symposium, Robot Demonstrations, will highlight 12 of the best demo proposals, with short descriptions of the demos by the authors.

When: Monday, September 26, 4pm

Where: Continental Parlor 7

List of talks:

SP2 – Aquila - The Cognitive Robotics Toolkit

SP3 – General Purpose Service Robot

SP4 – Active Perception Planning on the Care-o-Bot platform

SP6 – Using Mobile Manipulation to solve a Household Task

SP7 – Demonstration of iTaSC as a unified framework for task specification, control, and coordination for mobile manipulation

SP10 – Haptic coupling with augmented feedback between the KUKA youBot and the PR2 robot arms

SP11 – Detecting and Climbing Stairs with a Laser-equipped Nao Humanoid

SP12 – More cowbell! A musical ensemble with the NAO thereminist

OR1 – Kilobot: A Low Cost Scalable Robot System for Collective Behaviors

OR2 – DisplaySwarm: A robot swarm displaying images

OR4 – Flying the Pixhawk MAV - A Computer Vision Controlled Quadrotor

OR6 – Bio-inspired vertebral column, compliance and semi-passive dynamics in a lightweight humanoid robot

Organization

Kai O. Arras

Social Robotics Lab

University of Freiburg, Germany

Oliver Brock

Robotics and Biology Laboratory

TU Berlin, Germany

Kurt Konolige

Willow Garage

Menlo Park, CA, USA

Luis Sentis

Department of Mechanical Engineering

University of Texas at Austin, USA

For further questions, contact the organizers.